HPE today unveiled a major update to its Ezmeral software platform, which previously consisted of more than a dozen components but now includes just two: Ezmeral Data Fabric, which provides edge-to-cloud data management from a single pane of glass, and Unified Analytics, a new offering that HPE says will streamline customer access to top open source analytics and ML frameworks without vendor lock-in.

HPE Ezmeral Data Fabric is the flexible data fabric offering that HPE acquired from MapR back in 2019. It supports an S3-compatible object store, a Kafka-compatible streams offering, and a Posix-compatible file storage layer, all within a single global namespace (with vector and graph storage in the works). Support for the Apache Iceberg and Databricks Delta table formats helps to keep data consistent.

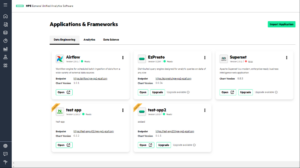

HPE updated its data fabric with a new SaaS delivery option, a new user interface, and more precise controls and automated policy management. But perhaps a bigger innovation lies with Ezmeral Unified Analytics, which brings out-of-the-box deployment capabilities for a number of open source frameworks, including Apache Airflow, Apache Spark, Apache Superset, Feast, Kubeflow, MLflow, Presto SQL, and Ray.

Customers can deploy these frameworks atop their data without worrying about security hardening, data integration issues, being stuck on forked projects, or vendor lock-in, says Mohan Rajagopalan, vice president and general manager of HPE Ezmeral Software.

“Our customers get access to the latest, greatest open source without any vendor lock-in,” says Rajagopalan, who joined HPE about a year ago. “They get all the enterprise-grade guardrails that HPE can offer in terms of support and services, but no vendor lock-in.”

The open source community moves quickly, with frequent updates that add new features and functionality, Rajagopalan says. However, open source users do not always work as eagerly to ensure that their applications provide enterprise-grade security and compliance, he says. HPE will take up the mantle to ensure that work gets done on behalf of its customers.

HPE Ezmeral Unified Analytics gives users access to top open source analytics and ML frameworks (Image source: HPE)

“Patches, security updates, et cetera are all delivered to our customers. They don’t have to worry about it. They can simply use the tools. We keep the tools evergreen,” he says. “We make sure that all the tools can comply with the enterprise or the company’s security postures. So think about OIDC, single sign-on, et cetera. We take care of all the plumbing.”

More importantly, he says, HPE will ensure that the tools all play well together. Take Kubeflow and MLflow, for example.

“Kubeflow I think is the best-of-breed MLOps capability I’ve seen in the market today,” Rajagopalan says. “MLflow I think has the best model management capabilities. However, getting Kubeflow and MLflow to interact is nothing short of pulling teeth.”

HPE has committed to ensuring this compatibility without introducing any major code changes to the open source tools or forking the projects. That is important for HPE customers, Rajagopalan says, because they want to be able to move their applications easily, including running them on any cloud, at the edge, and on-prem.

“We don’t want to force our customers to choose the whole stack,” the software GM says. “We want to meet them where they’re comfortable and where their pain points are too.”

Asked if this is a re-run of the enterprise Hadoop model, in which vendors like Cloudera, Hortonworks, and MapR sought to build and keep in synch large collections of open source projects (Hive, HDFS, HBase, etc.), Rajagopalan said it was not. “I think this is learning, standing on the shoulders of giants,” he says. “However, I think that approach was flawed.”

There are important technical differences. For starters, HPE is embracing the separation of compute and storage, which eventually became a major sticking point in the Hadoop game and which ultimately led to the demise of HDFS and YARN in favor of S3 object storage and Kubernetes. HPE’s Ezmeral Data Fabric and Unified Analytics still support HDFS (it’s being deprioritized), but today’s target is S3 storage (along with Kafka and Posix file systems) combined with containers managed by Kubernetes.

“Everything that exists in Unified Analytics is a Kubernetized app of Spark or Kubeflow or MLflow or whatever framework we included there,” Rajagopalan says. “The blast radius for each app is just its own container. However, behind the scenes, we’ve also built a bunch of plumbing such that the apps can in some sense communicate with one another, and at the same time we’ve also made it easy for us to replace or upgrade apps in place without disrupting any of the other apps.”

However, just rubbing some Kubernetes on a bucket of open source bits doesn’t make it enterprise-grade. Companies must pay to get Unified Analytics, and they’re paying for HPE’s expertise in ensuring that these applications can cohabitate without causing a ruckus.

“I don’t think Kubernetes is the secret sauce for keeping everything in synch,” Rajagopalan says. “I don’t think there is any magic box. I think we have a lot of experience in managing these tools. That’s what is the magic sauce.”

Support for the Apache Iceberg and Databricks Delta Lake formats will also play a role in helping HPE do what the Hadoop community ultimately failed at: maintaining a distribution of a large number of constantly evolving open source frameworks without causing lock-in.

“It’s huge,” Rajagopalan said of the presence of Iceberg in Unified Analytics. Iceberg has been adopted by Snowflake and other cloud data warehouse vendors, like Databricks, that HPE is providing data connectors to. Snowflake is popular among HPE customers who want to do BI and reporting, while Databricks and its Delta Lake format are popular among customers doing AI and ML work, he says.

“What we try to do is we try to provide the right sets of abstractions, and here we’re leaning on open standards. We don’t want to build our own bespoke abstractions,” Rajagopalan says. “Right now, I would like for HP to be Switzerland, which is we try to provide the best of options to our customers. As the market matures, we might take a more opinionated stance. But I think there is enough momentum in each of these spaces where it’s hard for us to pick one over the other.”

Related Items:

HPE Adds Lakehouse to GreenLake, Targets Databricks

HPE Unveils ‘Ezmeral’ Platform for Next-Gen Apps

Data Mesh Vs. Data Fabric: Understanding the Differences
