While the first systems based on Intel’s upcoming Meteor Lake (14th Gen Core) processors are still at least a couple of months out, and thus just a bit too far out to show off at Computex, Intel is already laying the groundwork for Meteor Lake’s launch. For this year’s show, in what has very quickly become an AI-centric event, Intel is using Computex to lay out its vision of client-side AI inference for the next generation of systems. This includes both some new disclosures about the AI processing hardware that will be in Intel’s Meteor Lake hardware, as well as what Intel expects OSes and software developers are going to do with the new capabilities.

AI, of course, has quickly become the operative buzzword of the technology industry over the last several months, especially following the public introduction of ChatGPT and the explosion of interest in what’s now being called “Generative AI”. So, as in the early adoption stages of other major new compute technologies, hardware and software vendors alike are still in the process of figuring out what can be done with this new technology, and what the best hardware designs are to power it. And behind all of that … let’s just say there’s a lot of potential revenue waiting in the wings for those companies that succeed in this new AI race.

Intel for its part is no stranger to AI hardware, though it’s certainly not a field that normally gets top billing at a company best known for its CPUs and fabs (and in that order). Intel’s stable of wholly-owned subsidiaries in this space includes Movidius, which makes low-power vision processing units (VPUs), and Habana Labs, responsible for the Gaudi family of high-end deep learning accelerators. But even within Intel’s rank-and-file client products, the company has been including some very basic, ultra-low-power AI-adjacent hardware in the form of its Gaussian & Neural Accelerator (GNA) block for audio processing, which has been in the Core family since the Ice Lake architecture.

Still, in 2023 the winds are clearly blowing in the direction of adding even more AI hardware at every level, from the client to the server. So for Computex, Intel is disclosing a bit more about its AI efforts for Meteor Lake.

Meteor Lake: SoC Tile Includes a Movidius-derived VPU For Low-Power AI Inference

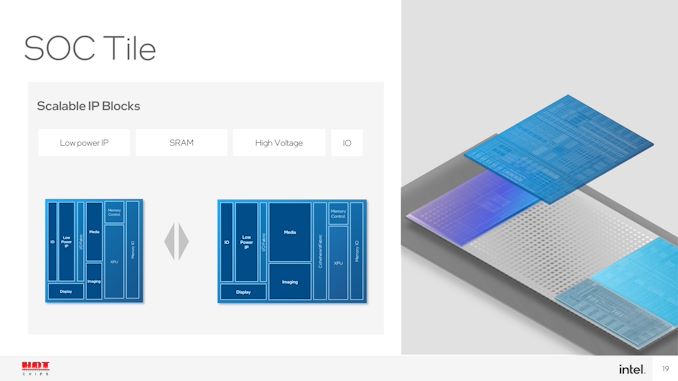

On the hardware side of matters, the big disclosure from Intel is that, as we have long suspected, Intel is baking some more powerful AI hardware into the disaggregated SoC. The block was previously documented in some Intel presentations as the “XPU” block within Meteor Lake’s SoC tile (the middle tile), and Intel is now confirming that this XPU is a full AI acceleration block.

Specifically, the block is derived from Movidius’s third-generation Vision Processing Unit (VPU) design and, going forward, is aptly being identified by Intel as a VPU.

The amount of technical detail Intel is offering on the VPU block for Computex is limited: we do not have any performance figures or an idea of how much of the SoC tile’s die space it occupies. But Movidius’s most recent VPU, the Myriad X, included a fairly flexible neural compute engine that has been responsible for giving the VPU its neural network capabilities. The engine on the Myriad X is rated for 1 TOPS of throughput, but at almost 6 years and several process nodes later, Intel is presumably aiming far higher for Meteor Lake.

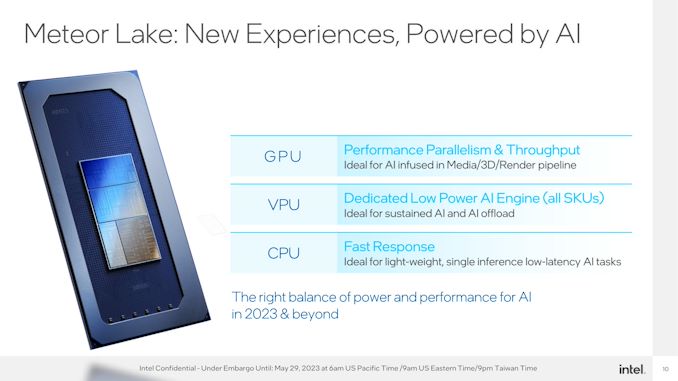

As it is part of the Meteor Lake SoC tile, the VPU will be present in all Meteor Lake SKUs. Intel will not be using it as a feature differentiator, à la ECC or even integrated graphics, so it will be a baseline feature available to all Meteor Lake-based parts.

The purpose of the VPU, in turn, is to provide a third option for AI processing. For high-performance needs there is the integrated GPU, whose large array of ALUs can provide relatively generous amounts of processing for the matrix math behind neural networks. Meanwhile the CPU will remain the processor of choice for simple, low-latency workloads that either can’t afford to wait for the VPU to be initialized, or where the size of the workload does not justify the effort. That leaves the VPU in the middle, as a dedicated but low-power AI accelerator to be used for sustained AI workloads that do not need the performance (and the power hit) of the GPU.
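
To make that three-way split concrete, here is a minimal Python sketch of how software might route a job between the three engines. Intel has not described any actual scheduling policy, so everything here is an assumption for illustration: the `Workload` fields, the two threshold constants, and the `pick_engine` function are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Engine(Enum):
    CPU = "cpu"
    GPU = "gpu"
    VPU = "vpu"


@dataclass
class Workload:
    ops_per_inference: float  # rough compute cost of one inference (hypothetical unit)
    sustained: bool           # True if the job runs continuously (e.g. a video call)
    latency_critical: bool    # True if it cannot wait for an accelerator to spin up


# Hypothetical cut-offs, chosen only to illustrate the idea.
SMALL_JOB_OPS = 1e7    # below this, offloading is not worth the setup effort
HEAVY_JOB_OPS = 1e10   # at or above this, the job wants the GPU's ALU array


def pick_engine(w: Workload) -> Engine:
    """Route a workload following the CPU / VPU / GPU split described above."""
    # Simple or latency-sensitive work stays on the CPU.
    if w.latency_critical or w.ops_per_inference < SMALL_JOB_OPS:
        return Engine.CPU
    # Heavy work accepts the GPU's power cost in exchange for throughput.
    if w.ops_per_inference >= HEAVY_JOB_OPS:
        return Engine.GPU
    # Sustained, mid-sized work is the VPU's niche.
    if w.sustained:
        return Engine.VPU
    # One-off mid-sized jobs: assume setup cost outweighs the benefit.
    return Engine.CPU


if __name__ == "__main__":
    background_blur = Workload(ops_per_inference=1e9, sustained=True, latency_critical=False)
    print(pick_engine(background_blur))
```

In this sketch a continuous background-blur job lands on the VPU, while a single small or urgent inference stays on the CPU. A real policy would also have to weigh power state, memory traffic, and what the drivers expose.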

It’s also worth noting that, while not explicitly shown in Intel’s diagrams, the GNA block will remain in Meteor Lake as well. Its specialty is ultra-low-power operation, so it is still needed for things like wake-on-voice, and for compatibility with existing GNA-enabled software.

Past that, there’s a lot left we do not know about the Meteor Lake VPU. The fact that it’s even called a VPU and includes Movidius tech implies that it’s a design focused on computer vision, similar to Movidius’s discrete VPUs. If that is the case, then the Meteor Lake VPU may excel at processing visual workloads, but lack performance and flexibility in other areas. And while today’s disclosure from Intel quickly brings to mind questions about how this block will compare in performance and functionality to AMD’s Xilinx-derived Ryzen AI block, those are questions that will have to wait for another day.

For now, at least, Intel feels that it is well positioned to lead the AI transformation in the client space. And it wants the world, developers and users alike, to know.

The Software Side: What to Do With AI?

As noted in the introduction, hardware is only half of the equation when it comes to AI-accelerated software. Even more important than what to run it on is what to do with it, which is something that Intel and its software partners are still working on.

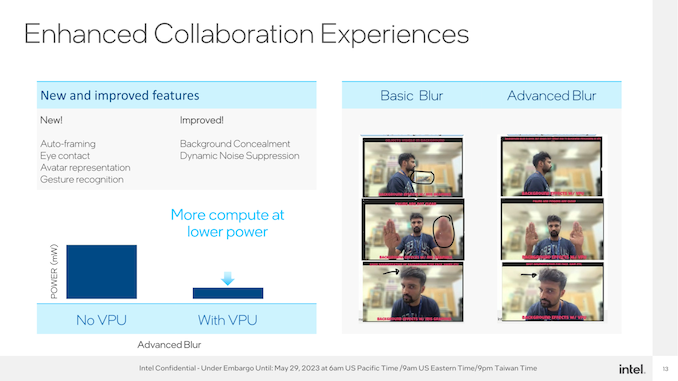

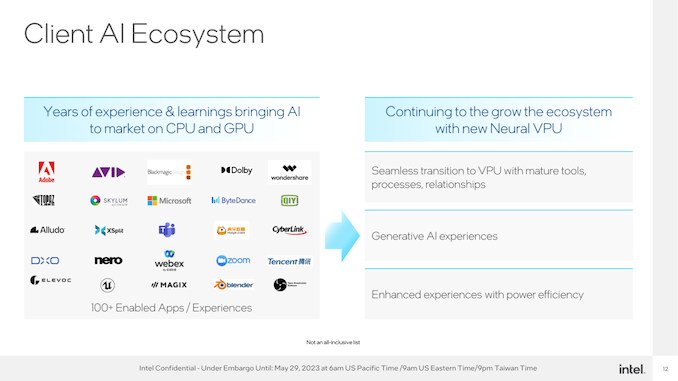

At the most basic level, including a VPU provides additional, energy-efficient performance for executing tasks that are already more or less AI-driven today on some platforms, such as dynamic noise suppression and background segmentation. In that respect, the addition of a VPU is catching up with smartphone-class SoCs, where things like Apple’s Neural Engine and Qualcomm’s Hexagon NPU provide similar acceleration today.

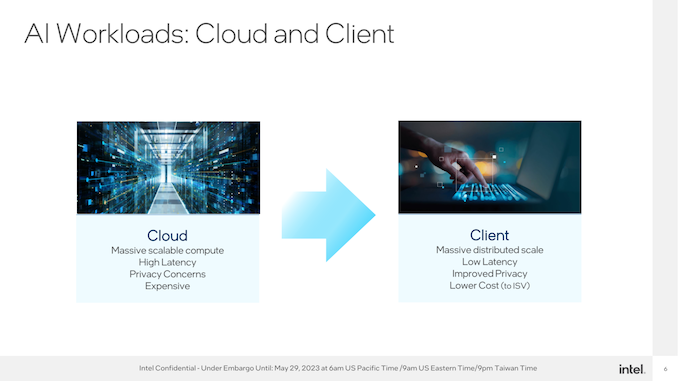

But Intel has its eyes on a much bigger prize. The company wants both to promote moving what are currently server AI workloads to the edge (in other words, moving AI processing to the client) and to foster entirely new AI workloads.

What those are, at this point, remains to be seen. Microsoft laid out some of its own ideas last week at its annual Build conference, including the announcement of a copilot function for Windows 11. And the OS vendor is also laying some groundwork for developers with its Open Neural Network Exchange (ONNX) runtime.
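
For a rough sense of what that groundwork looks like from the developer’s side, ONNX Runtime lets an application name an ordered list of “execution providers” (hardware backends) and falls back down the list. The sketch below is plain Python with no ONNX Runtime dependency, and it carries some loud assumptions: `pick_providers` is a hypothetical helper, and nothing in Intel’s disclosure says which provider, if any, will expose the Meteor Lake VPU. The provider names in the example are existing ONNX Runtime identifiers used purely as placeholders.

```python
from typing import Iterable, List

# ONNX Runtime's default CPU backend, used here as the universal fallback.
CPU_PROVIDER = "CPUExecutionProvider"


def pick_providers(available: Iterable[str], preferred: Iterable[str]) -> List[str]:
    """Hypothetical helper: keep preferred providers that are actually
    available, in preference order, and always end with the CPU fallback."""
    available_set = set(available)
    chosen = [p for p in preferred if p in available_set]
    if CPU_PROVIDER not in chosen:
        chosen.append(CPU_PROVIDER)
    return chosen


if __name__ == "__main__":
    # Placeholder lists; which provider would map to the VPU is an assumption.
    available = ["DmlExecutionProvider", "CPUExecutionProvider"]
    preferred = ["OpenVINOExecutionProvider", "DmlExecutionProvider"]
    print(pick_providers(available, preferred))
```

With ONNX Runtime installed, the `available` list would come from `onnxruntime.get_available_providers()`, and the result would be passed as the `providers=` argument when constructing an `onnxruntime.InferenceSession`.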

To some degree, the entire tech world is at a point where it has a new hammer, and now everything is starting to look a lot like a nail. Intel, for its part, is certainly not removed from that, as even today’s disclosure is more aspirational than it is about specific benefits on the software side of matters. But these truly are the early days for AI, and no one has a good feel for what can or cannot be done. Certainly, there are a few nails that can be hammered.

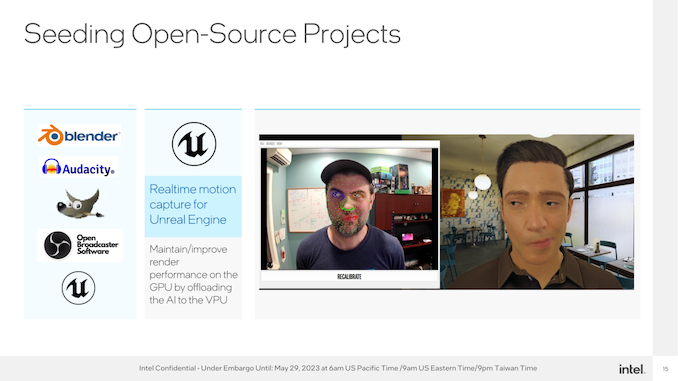

To that end, Intel is looking to foster a “build it and they will come” ecosystem for AI in the PC space. Provide the hardware across the board, work with Microsoft to provide the software tools and APIs needed to use the hardware, and see what new experiences developers can come up with, or alternatively, what workloads they can move on to the VPU to reduce power usage.

Ultimately, Intel is expecting that AI-based workloads are going to transform the PC user experience. And whether or not that fully happens, the expectation is enough to warrant the inclusion of hardware for the task in its next generation of CPUs.